ContextGem is a free, open-source LLM framework that makes it radically easier to extract structured data and insights from documents — with minimal code.

Most popular LLM frameworks for extracting structured data from documents require extensive boilerplate code to extract even basic information. This significantly increases development time and complexity.

ContextGem addresses this challenge by providing a flexible, intuitive framework that extracts structured data and insights from documents with minimal effort. The most complex and time-consuming parts are handled by built-in abstractions, eliminating boilerplate code and reducing development overhead.

Read more on the project motivation in the documentation.

| Built-in abstractions | ContextGem | Other LLM frameworks* |
|---|---|---|
| Automated dynamic prompts | 🟢 | ◯ |
| Automated data modelling and validators | 🟢 | ◯ |
| Precise granular reference mapping (paragraphs & sentences) | 🟢 | ◯ |
| Justifications (reasoning backing the extraction) | 🟢 | ◯ |
| Neural segmentation (SaT) | 🟢 | ◯ |
| Multilingual support (I/O without prompting) | 🟢 | ◯ |
| Single, unified extraction pipeline (declarative, reusable, fully serializable) | 🟢 | 🟡 |
| Grouped LLMs with role-specific tasks | 🟢 | 🟡 |
| Nested context extraction | 🟢 | 🟡 |
| Unified, fully serializable results storage model (document) | 🟢 | 🟡 |
| Extraction task calibration with examples | 🟢 | 🟡 |
| Built-in concurrent I/O processing | 🟢 | 🟡 |
| Automated usage & costs tracking | 🟢 | 🟡 |
| Fallback and retry logic | 🟢 | 🟢 |
| Multiple LLM providers | 🟢 | 🟢 |

🟢 - fully supported - no additional setup required

🟡 - partially supported - requires additional setup

◯ - not supported - requires custom logic

\* See descriptions of ContextGem abstractions and comparisons of specific implementation examples using ContextGem and other popular open-source LLM frameworks.

- Extract structured data from documents (text, images)

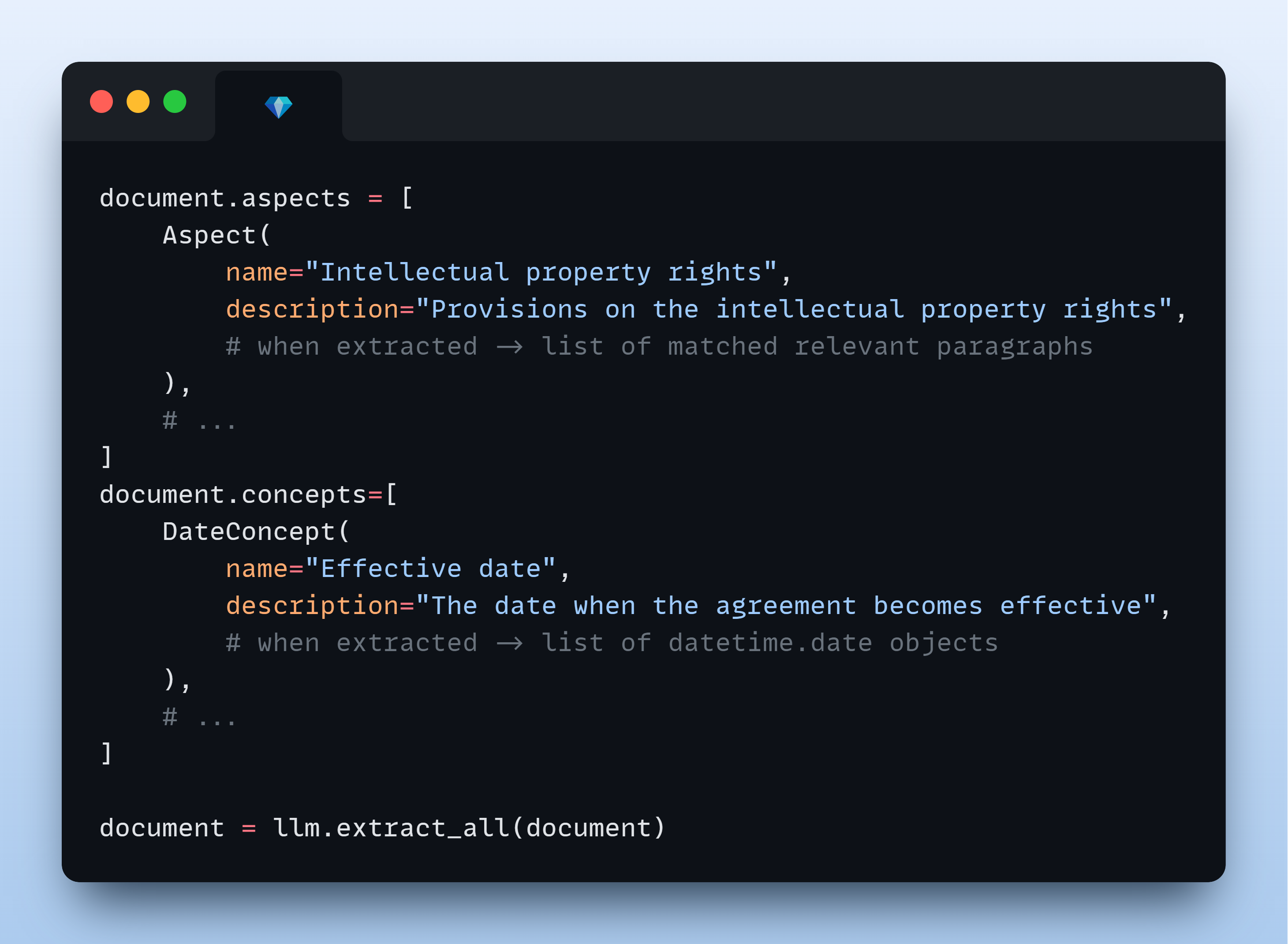

- Identify and analyze key aspects (topics, themes, categories) within documents

- Extract specific concepts (entities, facts, conclusions, assessments) from documents

- Build complex extraction workflows through a simple, intuitive API

- Create multi-level extraction pipelines (aspects containing concepts, hierarchical aspects)

```bash
pip install -U contextgem
```

```python
# Quick Start Example - Extracting anomalies from a document, with source references and justifications

import os

from contextgem import Document, DocumentLLM, StringConcept

# Sample document text (shortened for brevity)
doc = Document(
    raw_text=(
        "Consultancy Agreement\n"
        "This agreement between Company A (Supplier) and Company B (Customer)...\n"
        "The term of the agreement is 1 year from the Effective Date...\n"
        "The Supplier shall provide consultancy services as described in Annex 2...\n"
        "The Customer shall pay the Supplier within 30 calendar days of receiving an invoice...\n"
        "The purple elephant danced gracefully on the moon while eating ice cream.\n"  # 💎 anomaly
        "This agreement is governed by the laws of Norway...\n"
    ),
)

# Attach a document-level concept
doc.concepts = [
    StringConcept(
        name="Anomalies",  # in longer contexts, this concept is hard to capture with RAG
        description="Anomalies in the document",
        add_references=True,
        reference_depth="sentences",
        add_justifications=True,
        justification_depth="brief",
        # see the docs for more configuration options
    )
    # add more concepts to the document, if needed
    # see the docs for available concepts: StringConcept, JsonObjectConcept, etc.
]
# Or use `doc.add_concepts([...])`

# Define an LLM for extracting information from the document
llm = DocumentLLM(
    model="openai/gpt-4o-mini",  # or another provider/LLM
    api_key=os.environ.get(
        "CONTEXTGEM_OPENAI_API_KEY"
    ),  # your API key for the LLM provider
    # see the docs for more configuration options
)

# Extract information from the document
doc = llm.extract_all(doc)  # or use async version `await llm.extract_all_async(doc)`

# Access extracted information in the document object
print(
    doc.concepts[0].extracted_items
)  # extracted items with references & justifications
# or `doc.get_concept_by_name("Anomalies").extracted_items`
```

See more examples in the documentation:

- Aspect Extraction from Document

- Extracting Aspect with Sub-Aspects

- Concept Extraction from Aspect

- Concept Extraction from Document (text)

- Concept Extraction from Document (vision)

- LLM chat interface

- Extracting Aspects Containing Concepts

- Extracting Aspects and Concepts from a Document

- Using a Multi-LLM Pipeline to Extract Data from Several Documents
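The quick start mentions an async variant, `extract_all_async`. As a rough sketch of how several documents might be processed concurrently with it, the helper below wraps that call in `asyncio.gather` with a concurrency cap. The helper name `extract_many` and the `max_concurrent` parameter are hypothetical and not part of ContextGem; the only method assumed on the `llm` object is `extract_all_async(doc)`, as shown in the quick start. Whether sharing one LLM object across concurrent calls suits your setup (rate limits, cost tracking) is worth checking against the documentation.

```python
import asyncio
from typing import Any, List, Sequence


async def extract_many(llm: Any, docs: Sequence[Any], max_concurrent: int = 3) -> List[Any]:
    """Run `llm.extract_all_async` over several documents concurrently.

    Hypothetical helper: `llm` is any object exposing an async
    `extract_all_async(doc)` method, such as the one named in the quick start.
    """
    # Cap the number of in-flight requests to stay within provider rate limits.
    semaphore = asyncio.Semaphore(max_concurrent)

    async def extract_one(doc: Any) -> Any:
        async with semaphore:
            return await llm.extract_all_async(doc)

    # gather returns results in the same order as the input documents.
    return await asyncio.gather(*(extract_one(doc) for doc in docs))
```

A call would look like `processed = asyncio.run(extract_many(llm, [doc_a, doc_b]))`, where `doc_a` and `doc_b` are documents prepared as in the quick start.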

To create a ContextGem document for LLM analysis, you can either pass raw text directly, or use built-in converters that handle various file formats.

ContextGem provides a built-in converter to easily transform DOCX files into LLM-ready data.

- Extracts information that other open-source tools often do not capture: misaligned tables, comments, footnotes, textboxes, headers/footers, and embedded images

- Preserves document structure with rich metadata for improved LLM analysis

```python
# Using ContextGem's DocxConverter

from contextgem import DocxConverter

converter = DocxConverter()

# Convert a DOCX file to an LLM-ready ContextGem Document
# from path
document = converter.convert("path/to/document.docx")

# or from file object
with open("path/to/document.docx", "rb") as docx_file_object:
    document = converter.convert(docx_file_object)

# You can also use it as a standalone text extractor
docx_text = converter.convert_to_text_format(
    "path/to/document.docx",
    output_format="markdown",  # or "raw"
)
```

Learn more about DOCX converter features in the documentation.

ContextGem leverages LLMs' long context windows to deliver superior extraction accuracy from individual documents. Unlike RAG approaches that often struggle with complex concepts and nuanced insights, ContextGem capitalizes on continuously expanding context capacity, evolving LLM capabilities, and decreasing costs. This focused approach enables direct information extraction from complete documents, eliminating retrieval inconsistencies while optimizing for in-depth single-document analysis. While this delivers higher accuracy for individual documents, ContextGem does not currently support cross-document querying or corpus-wide retrieval - for these use cases, modern RAG systems (e.g., LlamaIndex, Haystack) remain more appropriate.

Read more on how ContextGem works in the documentation.

ContextGem supports both cloud-based and local LLMs through LiteLLM integration:

- Cloud LLMs: OpenAI, Anthropic, Google, Azure OpenAI, and more

- Local LLMs: Run models locally using providers like Ollama, LM Studio, etc.

- Model Architectures: Works with both reasoning/CoT-capable (e.g. o4-mini) and non-reasoning models (e.g. gpt-4.1)

- Simple API: Unified interface for all LLMs with easy provider switching
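To illustrate what provider switching can look like in practice, here is a minimal sketch that builds keyword arguments for an LLM definition from a `<provider>/<model>` string, the form used in the quick start. The helper `llm_settings` is hypothetical, the Ollama model name and URL are placeholders, and the `api_base` argument is an assumption about `DocumentLLM` that should be checked against the documentation.

```python
import os
from typing import Dict, Optional


def llm_settings(
    model: str,
    api_key_env: Optional[str] = None,
    api_base: Optional[str] = None,
) -> Dict[str, Optional[str]]:
    """Collect keyword arguments for an LLM definition (hypothetical helper)."""
    # Model identifiers follow the "<provider>/<model>" form from the quick start.
    provider, sep, model_name = model.partition("/")
    if not sep or not provider or not model_name:
        raise ValueError(f"expected '<provider>/<model>', got {model!r}")

    settings: Dict[str, Optional[str]] = {"model": model}
    if api_key_env is not None:
        # Read the key from the environment rather than hard-coding it.
        settings["api_key"] = os.environ.get(api_key_env)
    if api_base is not None:
        # Assumed parameter name for pointing at a locally served model.
        settings["api_base"] = api_base
    return settings


# Cloud model: key read from the environment, as in the quick start.
cloud = llm_settings("openai/gpt-4o-mini", api_key_env="CONTEXTGEM_OPENAI_API_KEY")

# Local model served by Ollama (placeholder model name and URL).
local = llm_settings("ollama/llama3.1:8b", api_base="http://localhost:11434")

# With ContextGem installed, either dict could then be passed on, e.g.:
#   from contextgem import DocumentLLM
#   llm = DocumentLLM(**local)
```

Keeping the provider choice in one settings dict means the rest of the extraction code does not change when the model does.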

ContextGem documentation offers guidance on optimization strategies to maximize performance, minimize costs, and enhance extraction accuracy:

- Optimizing for Accuracy

- Optimizing for Speed

- Optimizing for Cost

- Dealing with Long Documents

- Choosing the Right LLM(s)

ContextGem allows you to save and load Document objects, pipelines, and LLM configurations with built-in serialization methods:

- Save processed documents to avoid repeating expensive LLM calls

- Transfer extraction results between systems

- Persist pipeline and LLM configurations for later reuse

Learn more about serialization options in the documentation.
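As one way to apply the first point above (avoiding repeated LLM calls), the sketch below caches a serialized result on disk and reuses it on later runs. The helper `load_or_compute` is hypothetical and works with any function that returns a JSON string. The ContextGem method names in the trailing comment (`to_json`, `Document.from_json`) are assumptions to verify against the serialization documentation.

```python
from pathlib import Path
from typing import Callable


def load_or_compute(cache_file: Path, compute_json: Callable[[], str]) -> str:
    """Return cached JSON if present; otherwise compute, store, and return it."""
    if cache_file.exists():
        # Cache hit: skip the expensive computation entirely.
        return cache_file.read_text(encoding="utf-8")

    payload = compute_json()
    cache_file.parent.mkdir(parents=True, exist_ok=True)
    cache_file.write_text(payload, encoding="utf-8")
    return payload


# Possible use with ContextGem (method names assumed, see lead-in):
#   doc_json = load_or_compute(
#       Path("cache/agreement.json"),
#       lambda: llm.extract_all(doc).to_json(),
#   )
#   doc = Document.from_json(doc_json)
```

The cache is keyed only by file path, so a changed document or extraction setup needs a new path or a deleted cache file.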

Full documentation is available at contextgem.dev.

A raw text version of the full documentation is available at docs/docs-raw-for-llm.txt. This file is automatically generated and contains all documentation in a format optimized for LLM ingestion (e.g. for Q&A).

If you have a feature request or a bug report, feel free to open an issue on GitHub. If you'd like to discuss a topic or get general advice on using ContextGem for your project, start a thread in GitHub Discussions.

We welcome contributions from the community - whether it's fixing a typo or developing a completely new feature! To get started, please check out our Contributor Guidelines.

This project is automatically scanned for security vulnerabilities using CodeQL. We also use Snyk as needed for supplementary dependency checks.

See the SECURITY file for details.

ContextGem relies on these excellent open-source packages:

- pydantic: The gold standard for data validation

- Jinja2: Fast, expressive template engine that powers our dynamic prompt rendering

- litellm: Unified interface to multiple LLM providers with seamless provider switching

- wtpsplit: State-of-the-art text segmentation tool

- loguru: Simple yet powerful logging that enhances debugging and observability

- python-ulid: Efficient ULID generation

- PyTorch: Industry-standard machine learning framework

- aiolimiter: Powerful rate limiting for async operations

ContextGem is just getting started, and your support means the world to us! If you find ContextGem useful, the best way to help is by sharing it with others and giving the project a ⭐. Your feedback and contributions are what make this project grow!

This project is licensed under the Apache 2.0 License - see the LICENSE and NOTICE files for details.

Copyright © 2025 Shcherbak AI AS, an AI engineering company building tools for AI/ML/NLP developers.

Shcherbak AI is now part of Microsoft for Startups.

Connect with us on LinkedIn for questions or collaboration ideas.

Built with ❤️ in Oslo, Norway.